Just upgraded to Mojave? Getting the 🚫 of doom when you try to boot your Mac? Worried you’ve lost all your data? Don’t panic! Your Mac is recoverable, and this guide will help you get your drive back in shape.

This bug is caused by a new limitation introduced for FileVault encryption. As mentioned in the release notes, any APFS volume encrypted using Mojave’s implementation of FileVault becomes invisible to earlier versions of macOS until the encryption or decryption process completes. By itself, this is mostly harmless. However, it is made much worse by a bug [1] that causes the Mojave installer to incorrectly trigger re-encryption of an already-encrypted FileVault volume during the install process. Because full-drive encryption cannot complete in a timely manner, the Mojave installer times out before encryption finishes. The result is a macOS 10.13 (or older) install with a macOS 10.14 FileVault volume that hasn’t yet finished encrypting. This volume can’t be read by the startup manager, and so it becomes unbootable.

In order to fix this issue, we’ll need to let your drive finish encrypting. This guide will walk you through the process. Before we get started, make sure you have the right supplies on hand. You’ll need a USB key with a capacity of at least 8GB and access to a working Mac.

1. Create a USB Mojave installer

Download the Mojave installer application on your working Mac using the App Store. Don’t worry, you won’t be using that to install - just to create a USB installer for your broken Mac. Then follow these instructions from Apple. This will erase your USB key and turn it into a bootable USB install drive.
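For reference, the USB installer is built with the createinstallmedia tool that ships inside the installer app. The sketch below only prints the command so you can check it before running it, since it erases the target drive. It assumes the installer sits in /Applications under its default name; /Volumes/MyVolume is a placeholder for wherever your USB key is mounted.

```shell
# Build (but do not run) the createinstallmedia command for a given USB volume.
# Assumption: the installer app is at its default location in /Applications.
installer_cmd() {
  printf 'sudo "%s" --volume "%s"\n' \
    "/Applications/Install macOS Mojave.app/Contents/Resources/createinstallmedia" \
    "$1"
}

# Print the command for review. /Volumes/MyVolume is a placeholder mount point.
installer_cmd "/Volumes/MyVolume"
```

Once the path and volume name look right, paste the printed command into Terminal on your working Mac.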

2. Boot into the USB installer

Turn off the Mac that you’re upgrading to Mojave and insert the USB drive you just created. Turn the Mac back on, and immediately hold the Option key until the Startup Manager appears. Select the drive you created.

3. Enter Recovery Mode

The installer should provide an option to enter Recovery Mode. This is a different version of Recovery Mode than the one that’s built in to your Mac, and it will be able to see your hard drive.

4. Unlock and mount your hard drive

From the Utilities menu in the menu bar at the top of the screen, select Terminal. At the terminal prompt, type diskutil apfs list. In the output, you should see one volume whose FileVault status is listed as FileVault: in progress (or something similar). If it’s already listed as “unlocked”, then you’re good; proceed to step 5.

Unlock this drive by running diskutil apfs unlockVolume DISK, where DISK is the identifier listed after APFS Volume Disk for the volume you identified in the previous paragraph. For example, you might type diskutil apfs unlockVolume disk1s1. This command will ask you for a password; enter either your personal login password or a FileVault recovery key.
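If the diskutil apfs list output is long, a small filter can pair each volume identifier with its FileVault status. This is a rough sketch, not an official tool: it assumes each volume's "APFS Volume Disk" line appears before its "FileVault:" line, and filevault_summary is a name made up for this example.

```shell
# Print "<identifier>: <FileVault status>" for each volume found on stdin.
# Assumption: the "APFS Volume Disk" line precedes the "FileVault:" line
# for the same volume in the diskutil output.
filevault_summary() {
  awk '
    /APFS Volume Disk/ { sub(/.*:[ \t]*/, ""); id = $1 }
    /FileVault:/       { sub(/.*FileVault:[ \t]*/, ""); print id ": " $0 }
  '
}

# Usage in the Recovery Mode terminal (disk1s1 is only an example identifier):
#   diskutil apfs list | filevault_summary
#   diskutil apfs unlockVolume disk1s1
```

The identifier printed next to the in-progress status is the one to pass to unlockVolume.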

Once you’ve completed this step, exit Terminal. You should be returned to the Recovery Mode main menu.

5. Create a new APFS volume

Open Disk Utility from the Recovery Mode main menu. Select your main hard drive, right-click it, and choose “Add APFS Volume”. Don’t worry, this isn’t repartitioning your drive: the new volume will live in the same APFS container as your main volume, and you will be able to delete it later. Name it “Temporary”, then click “Add”.

Once you’ve completed this step, exit Disk Utility. You should be returned to the Recovery Mode main menu. Return to the main Mojave installer menu.

6. Install Mojave on the new volume

Choose to install Mojave on the volume named “Temporary” that you created in the previous step. This will be a temporary fresh install, so don’t worry too much about it - go ahead and keep all the options at their defaults.

7. Allow drive encryption to finish

Once your install has finished and you’ve logged in, open Disk Utility. Unlock your normal hard drive by selecting it and clicking the “Mount” button in the toolbar. You will be asked for a password; as in the terminal in step 4, you will need to provide either your login password (from your old Mac install) or your recovery key.

At this point, drive encryption will start back up again. You will need to wait for that to finish; this could take a few hours. If you want to check on the progress, you can open a terminal and look at the output of diskutil apfs list; encryption is finished when you run that command and see the status read FileVault: Yes.
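If you’d rather not re-run the command by hand, a loop can poll it for you. The sketch below relies only on the status strings quoted in this post (“FileVault: Yes” when finished), so treat the match as an assumption; encryption_finished is a made-up helper name and disk1s1 is an example identifier.

```shell
# Succeed (exit 0) when the FileVault line for volume "$1" starts with "Yes".
# Reads `diskutil apfs list` output on stdin.
# Assumption: the finished state reads "FileVault: Yes", as quoted in this post.
encryption_finished() {
  awk -v want="$1" '
    /APFS Volume Disk/ { sub(/.*:[ \t]*/, ""); id = $1 }
    /FileVault:/ && id == want {
      sub(/.*FileVault:[ \t]*/, "")
      found = ($0 ~ /^Yes/)
    }
    END { exit (found ? 0 : 1) }
  '
}

# Usage on the temporary install (check once a minute):
#   until diskutil apfs list | encryption_finished disk1s1; do sleep 60; done
#   echo "Encryption finished"
```

Polling once a minute is plenty, since the whole process takes hours.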

8. Upgrade your main drive to Mojave

Now you’re finally (finally!) ready to let the Mojave install complete. Open up your USB drive in the Finder and run the macOS installer app. Instead of picking the temporary volume, pick your original Mac volume. Let the installer complete. This time, your Mac should boot up like normal. You’re saved!

9. Clean up

Now that your main Mac volume is bootable again, you can get rid of the temporary volume we created in step 5. To do that, open Disk Utility and right-click the volume named “Temporary”. Click “Delete APFS Volume”, then confirm by clicking “Delete” again.

You’re done!

With any luck, you should be all set at this point. Feel free to reach out to me if you’re still having trouble! Big thanks to @mikeymikey for the suggestion of using a second APFS volume to install the temporary macOS 10.14. Thanks also to my manager, who allowed me to use my internal blog post as the basis for this post.

-

[1] I wish I could provide more detail about this bug, but as far as I know no details have been published. I’ve filed a radar but haven’t received a response. All I know is that this occurs, seemingly at random, to a small percentage of people who try to upgrade from macOS 10.13.6 to macOS 10.14 when FileVault is already enabled.

First, to start with the good

Unfortunately, the quality of the sleeve itself is subpar. The cardboard feels thin and flimsy compared to other double albums I own; it lacks weight. The gatefold hinge is also poorly folded, and doesn’t stay closed on its own when the records are in; it flops open awkwardly. The records also don’t slide comfortably into the sleeve when the gatefold is closed, which makes putting records back after a play more awkward than it has to be. (See right: the record is inserted as far as it gets when the gatefold is closed. The black thing poking out is the inner record sleeve.) If you open the sleeve to insert the records all the way, then they can’t be removed while the gatefold is closed, which is even more awkward. The overall feeling is surprisingly cheap for a $35 album.

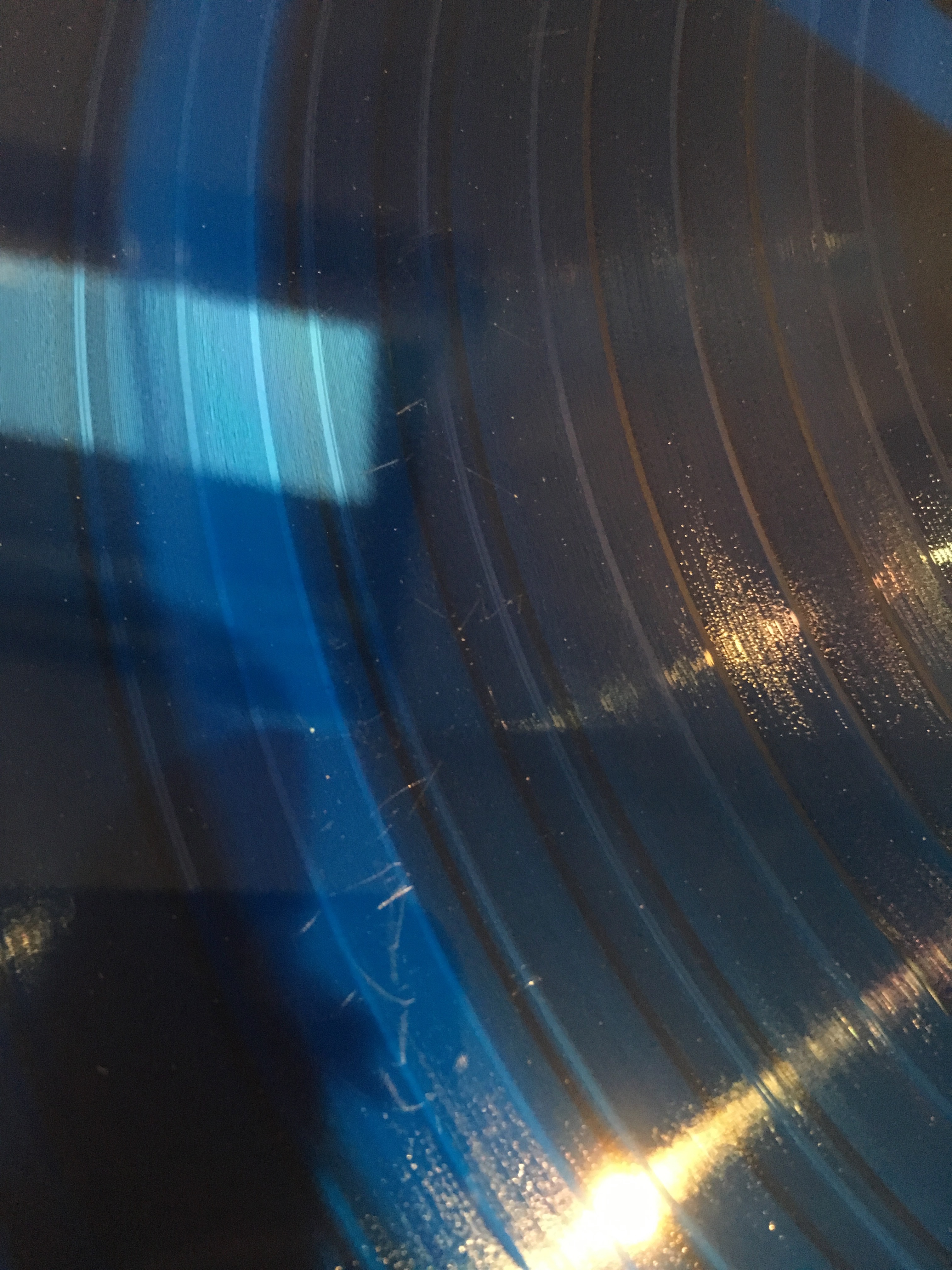

Unfortunately, my copy shipped with large scratches on sides A and B. (See the photo on the left—the scratches are very visible at full size.) Both discs were also covered in dust fresh out of the package. Everything I’ve seen suggests this isn’t an isolated issue—I know other people whose iam8bit records shipped with scratches before the first play, and from scanning their twitter feed, it looks like this is a common complaint with other customers. Needless to say, this is a huge issue, and I’m shocked it’s as common a problem as it is with them.

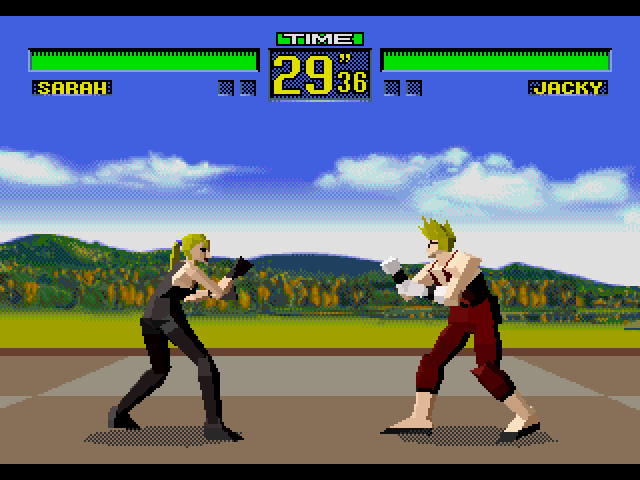

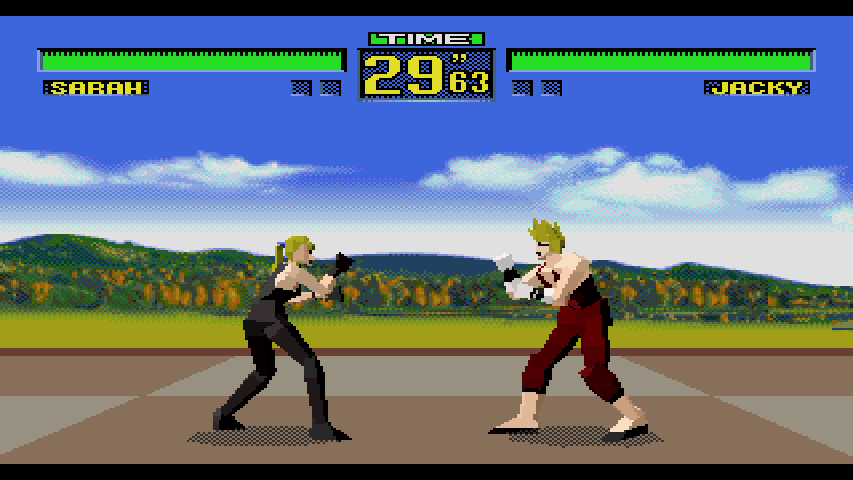

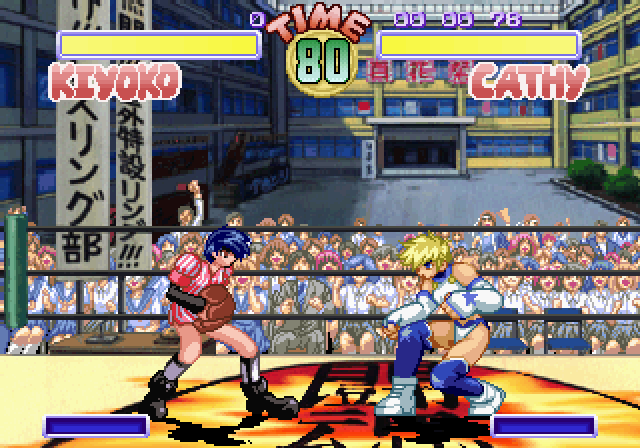

In 1999, an unofficial mod titled

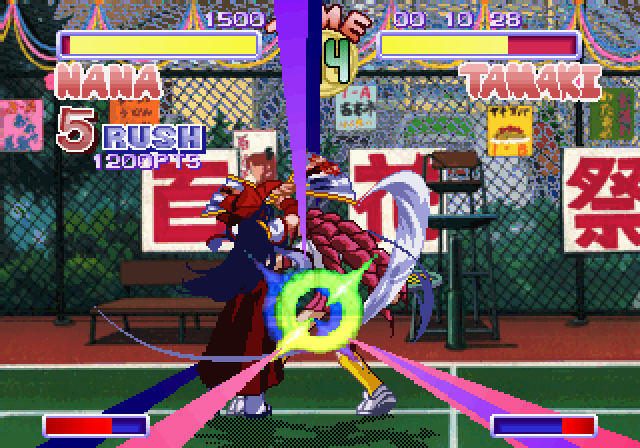

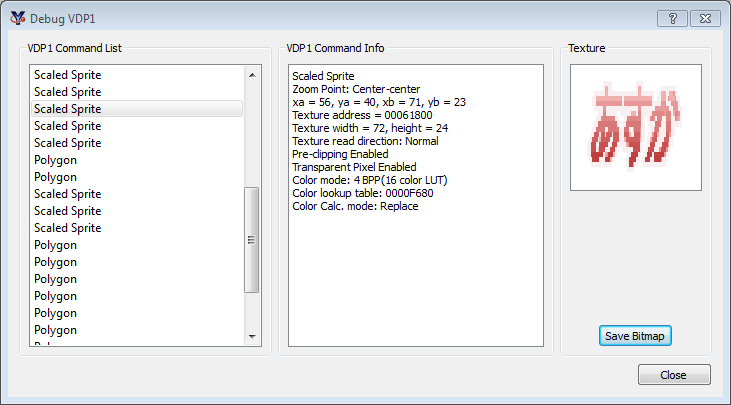

Via trial and error, and with help from information gleaned via the